Learning Kubernetes for fun and profit

Building a Raspberry Pi Cluster From Scratch

The winter holidays are coming up. If you ever get tired of baking gingerbread cookies, I have a suggestion for a fun project. Perhaps you’re stuck at home anyway because of dreary weather or because of yet another Covid lockdown. In that case, consider buying a Raspberry Pi 4 single-board computer for a cool new project. The number of things you can build with these is amazing. How about a robot rover, a weather station, a media server, or a security system, for example? I went a bit further and bought four Raspberry Pis to hook them up together as a cluster. The objective was to gain some hands-on experience with Kubernetes. If you are interested in this sort of thing, this article provides the essential hints and links on how to get this done. It is not a manual with detailed instructions (there are already a number of those on the Internet), but rather an overview that covers all the required steps to get from zero to a functioning Kubernetes cluster. Before we get into it, here is the what, how and why:

- What is this about?

  A small server system running a cloud platform aka container orchestration system identical to those operated in current data centres. Ninja devops skills.

- How long does it take to set up?

  About 16 hours if you’re doing it for the first time.

- How much does it cost?

  About 425 USD. Possibly less if you already have some parts.

- Isn’t this just a toy?

  While you can’t run an actual data centre with this, the latest Raspberry Pi is more powerful than you might think. It offers an excellent computing power per dollar ratio and it is sufficient for experimental and/or low-traffic projects.

- Is there any soldering involved?

  Nope.

- Why Kubernetes?

  Because it is the leading container orchestration platform. Docker and Kubernetes have been taking over the (computing) world in the past few years.

- Couldn’t I just install minikube?

  Of course. But that is just half the fun and half the possibilities.

The first part of setting up a Pi cluster is to obtain and install the hardware. Obviously, the most important component is the Raspberry Pi 4 computer itself. You need at least three or four of them. Four is better if you want to do HA (high-availability) installations. The Pi comes with different memory options. For Kubernetes, I’d recommend the 8GB version, because running a bunch of containers on the cluster is memory-intensive. It is possible to build a cluster with older Pi models, but the latest model with the highest memory option is best suited. The Raspberry Pi 4 has a 64-bit quad-core ARM CPU. This is important to remember when installing the software later.

For power, you have two options. Each Pi can be powered either via USB-C or via Power over Ethernet (PoE). For the latter you need a PoE switch and, more importantly, one PoE hat for each Pi. Since the PoE hat component costs around 18 USD, I went for the cheaper USB multiport charger. The charger should be dimensioned for a peak consumption of 5.5W per Pi, so if you have four Pis, it should supply at least 22W. More is safer. During normal operation, the Pis consume only about 3W each.

Additionally, you need one micro SD card for each Pi as mass storage. While the storage requirements for the OS are low, running Kubernetes means that we have container images to take care of and eventually we want to create a few volumes for our applications. Therefore, I would recommend using at least 64GB for each Pi. Finally, you need some sort of case or mount. I used a cheap acrylic stack case which can hold small cooling fans for each Pi.
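The charger sizing above is simple arithmetic, and it is easy to redo for a different number of boards. The following is only a sketch using the figures from the text (5.5W peak, 3W typical, and the 30W charger from the part list); the variable names are made up for illustration.

```shell
#!/bin/sh
# Power budget for a USB charger feeding several Pis.
# Figures are taken from the article; adjust them for your own setup.
pis=4            # number of Raspberry Pis
peak_w=5.5       # peak draw per Pi in watts
typical_w=3      # draw per Pi during normal operation in watts
charger_w=30     # rated output of the charger in watts

# sh has no floating point arithmetic, so awk does the multiplication.
peak_total=$(awk -v n="$pis" -v w="$peak_w" 'BEGIN { printf "%.1f", n * w }')
typical_total=$(awk -v n="$pis" -v w="$typical_w" 'BEGIN { printf "%.1f", n * w }')
headroom=$(awk -v c="$charger_w" -v p="$peak_total" 'BEGIN { printf "%.1f", c - p }')

echo "Peak load:    ${peak_total} W"
echo "Typical load: ${typical_total} W"
echo "Headroom:     ${headroom} W"
```

If the headroom comes out negative, the charger is too small for the number of boards.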

Part list

| Description | Quantity | Unit Price | Price |
|---|---|---|---|
| Raspberry Pi 4B (8GB) | 4 | $75 | $300 |
| SD Card 64GB | 4 | $15 | $60 |
| Ethernet Patch Cable (Cat6) | 4 | $2 | $8 |
| USB-C Cable | 4 | $1 | $4 |
| Case/Mount | 1 | $10 | $10 |
| Cooling Fan | 4 | $1.50 | $6 |
| Unmanaged 6-port Switch | 1 | $25 | $25 |
| Multi USB Charger 30W | 1 | $12 | $12 |
| Total | | | $425 |

The assembly is quite simple and there are many videos on YouTube that show how it’s done, such as this one. Once the hardware is assembled, the operating system must be installed on each of the micro SD cards. I would recommend the latest Ubuntu Server version, because it has a simple and quick installation procedure and already comes with some of the software needed for running a Kubernetes cluster. However, any other Linux distro with support for the 64-bit ARMv8 architecture will do. I used the Raspberry Pi Imager utility for flashing the micro SD cards. Once that is done, the cards can be inserted into the Raspberry Pi boards’ slots. At this point, you should also have wired up the Ethernet and connected the switch to your router.

While it is possible to provision Ubuntu Server installations with Ubuntu’s MAAS system, I found it easier to run the installation on each of the four Pis while temporarily connecting a monitor to the Raspberry Pi’s HDMI port. For a large number of computers, MAAS may be more efficient. After finishing the OS installations, you can run the Pis in headless mode via SSH, as the SSH server is already included in Ubuntu Server.

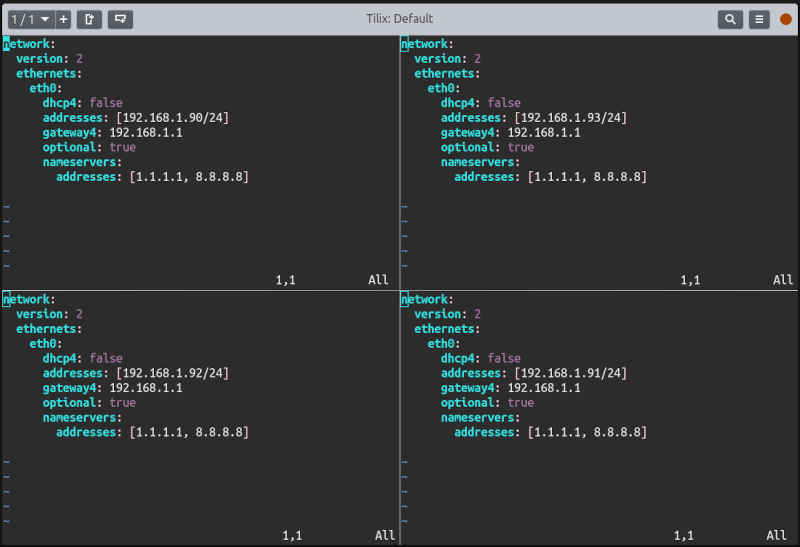

From this point on, it is beneficial to use a terminal emulator that can sync command input to multiple windows or tiles. This will save you a significant amount of typing, as you can execute commands on all Pis simultaneously instead of repeating each step on each computer. I am using Tilix for this purpose. For example, you could use this technique to perform the next step, which is to assign a static IP address to each of the computers. There are two ways of accomplishing this, either by having your router do it via DHCP reservation to MAC addresses, or by setting up the IPs directly on each computer. The procedure is described here in detail. Finally, you may want to assign host names of your choosing to the devices, and configure the shell in your preferred way. After that, you are done with the bare hardware and OS setup, and you can move on to installing Docker and Kubernetes (k8s). It’s worth mentioning that there are different ways of setting up Kubernetes. For example, Ubuntu offers microk8s, a stripped-down snap-based distribution of Kubernetes for local and small device deployments. I haven’t tried microk8s, so the rest of the article describes how to set up standard Kubernetes, which is at version 1.19 at the time of this writing.
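For the second way of assigning static IPs mentioned above (setting the address directly on each computer), Ubuntu Server uses netplan. The sketch below prints a netplan document for one node. The interface name eth0, the 192.168.1.x addresses, and the function name are assumptions for illustration; substitute the values of your own network.

```shell
#!/bin/sh
# Print a netplan document that gives one node a fixed address.
# All addresses here are placeholders, not values from the article.
render_netplan() {
  addr="$1"      # node address in CIDR form, e.g. 192.168.1.101/24
  gateway="$2"   # address of your router
  cat <<EOF
network:
  version: 2
  ethernets:
    eth0:
      dhcp4: false
      addresses: [$addr]
      routes:
        - to: 0.0.0.0/0
          via: $gateway
      nameservers:
        addresses: [$gateway]
EOF
}

# Example for the first node; use a different address on each Pi.
render_netplan 192.168.1.101/24 192.168.1.1
```

To use it, the output would be saved as a YAML file under /etc/netplan on the node and activated with `sudo netplan apply`. Doing this over SSH changes the address you are connected to, so expect to reconnect.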

Before you can move on to installing Kubernetes, you must first install Docker onto each of the Raspberry Pis using the Ubuntu package manager. You also have to adjust the Linux cgroup settings and allow iptables to see bridged network traffic. These steps are described in detail in this excellent article by Chris Collins, which you can follow from start to end, although the last step in that article, “validate the cluster”, is optional. The Kubernetes installation itself consists of five phases: (1) installing the k8s packages, (2) configuring the CNI and CIDR for Kubernetes networking, (3) initialising the control plane, (4) installing the network addon, and (5) joining all nodes to the cluster. The suggestions in that article are sensible and should work in most home network environments. Make sure that you are using the latest available packages in each case, which may differ from the versions cited in the article. If you decide to use another network addon instead of the suggested Flannel addon, check the compatibility with MetalLB first (see below). Canal, Cilium and Romana are safe choices too, yet the mentioned Flannel addon is a low-overhead plugin that works just fine.
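To give a feeling for what the five phases look like on the command line, here is a simplified sketch using kubeadm. It is not the exact procedure from the referenced article. It assumes that the Kubernetes apt repository is already configured, that the Flannel manifest has been downloaded to a local file (the file name kube-flannel.yml is a placeholder), and that Flannel's default pod network 10.244.0.0/16 is used. The script only prints the commands unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 to really run them on a node.
run() {
  echo "+ $*"
  [ "${DRY_RUN:-1}" = "1" ] || "$@"
}

POD_CIDR="10.244.0.0/16"             # Flannel's default pod network
FLANNEL_MANIFEST="kube-flannel.yml"  # placeholder: manifest downloaded beforehand

# Phase 1, on every node: install the k8s packages.
all_nodes_steps() {
  run sudo apt-get install -y kubelet kubeadm kubectl
}

# Phases 2 to 4, on the control-plane node only.
control_plane_steps() {
  # Initialise the control plane with the pod network CIDR.
  run sudo kubeadm init --pod-network-cidr="$POD_CIDR"
  # Make the admin credentials available to kubectl for the current user.
  run mkdir -p "$HOME/.kube"
  run sudo cp /etc/kubernetes/admin.conf "$HOME/.kube/config"
  run sudo chown "$(id -u):$(id -g)" "$HOME/.kube/config"
  # Install the network addon.
  run kubectl apply -f "$FLANNEL_MANIFEST"
  # Phase 5: print the "kubeadm join ..." line to run on each worker.
  run sudo kubeadm token create --print-join-command
}

all_nodes_steps
control_plane_steps
```

The join command printed in the last step is then executed with sudo on each of the remaining Pis, after which `kubectl get nodes` on the control-plane node should list all of them.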

At this point you should have a working Kubernetes cluster running on the Raspberry Pis. Let’s make sure that you can administer it easily from your workstation. The command-line tool for accessing and administering a k8s cluster is called kubectl. Its installation is described here. It requires you to copy the configuration file from /etc/kubernetes/admin.conf on the cluster to ~/.kube/config on your workstation. I would also recommend installing the kubens and kubectx command-line tools. These allow easy switching between different clusters and different cluster namespaces.

If you prefer to interact with Kubernetes through a GUI, install Lens. The Lens IDE has many useful features, such as a graphical display of cluster resources and stats. Note that in order to display these, you have to install Prometheus on the cluster to collect the metrics that Lens displays. Unfortunately, this does not work out-of-the-box, as the kube-state-metrics deployment does not support the ARM64 architecture. This problem can be fixed by changing the container image, and it just so happens that there is another article by Chris Collins which describes exactly how this is done. If that seems too much trouble, you might want to use the web-based Kubernetes Dashboard UI instead. It is straightforward to install. However, in order to access it from your local machine, you need to create an access token and connect to the cluster via kubectl proxy.
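Getting the configuration file onto the workstation can be sketched as follows. This assumes that the admin configuration has already been copied to ~/.kube/config of the login user on the control-plane node (the usual step after kubeadm init), since /etc/kubernetes/admin.conf itself is only readable by root. The user name and address in NODE are placeholders. As a precaution, the script only prints the commands unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 to really run them on your workstation.
run() {
  echo "+ $*"
  [ "${DRY_RUN:-1}" = "1" ] || "$@"
}

NODE="ubuntu@192.168.1.101"   # placeholder: login and address of the control-plane node

fetch_kubeconfig() {
  run mkdir -p "$HOME/.kube"
  # Note: this overwrites an existing local configuration.
  run scp "$NODE:.kube/config" "$HOME/.kube/config"
  # The file contains cluster admin credentials, so keep it private.
  run chmod 600 "$HOME/.kube/config"
  # Quick check that the workstation can reach the cluster.
  run kubectl get nodes
}

fetch_kubeconfig
```

If you already manage other clusters from the same workstation, copy the file to a separate location instead and merge it by hand, so that kubectx can switch between them.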

You are now ready to create new deployments and services. You can also install any Helm charts on the Kubernetes cluster. Basically, you are all set. But if you want these applications to talk to the network outside Kubernetes, your options are somewhat limited. Currently you can only use services of type NodePort to expose them to the outside world. This means you can access applications via ports on your Raspberry Pi cluster nodes. The inconvenience of this method could be remedied by installing an external reverse proxy / load balancer (such as HAProxy) and configuring it with a bunch of NodePorts. A better solution may be to install a Kubernetes-internal load balancer. This enables you to expose services with the LoadBalancer service type and access them via dedicated IP addresses and/or hosts from the outside. That is where MetalLB comes in. The installation of MetalLB is simple, as it supports multiple architectures. MetalLB can be configured in two ways: in layer 2 mode or in Border Gateway Protocol (BGP) mode. I would recommend the former. In that case, MetalLB is assigned a dedicated block of IP addresses on your network which it hands out as virtual IPs to configured services.

Alternatively, services can be exposed with a Kubernetes Ingress. While this works only for HTTP/HTTPS services (meaning ports 80 and 443), you can create ingresses for as many services as you wish using dedicated hosts and/or vhosts. Ingresses are the preferred way to expose web services and protect them with TLS. They require the installation of yet another component, a so-called ingress controller.
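As an illustration of the layer 2 setup, the sketch below prints the two resources that tell MetalLB which block of addresses it may hand out. It uses the resource format of MetalLB 0.13 and later; older releases, including those available when this article was written, read the same information from a ConfigMap instead, so check the documentation for your version. The address range and the resource names are placeholders.

```shell
#!/bin/sh
# Print a MetalLB layer 2 configuration for a block of LAN addresses.
# Pick a range outside your router's DHCP pool; the one below is a placeholder.
render_metallb_pool() {
  range="$1"   # e.g. 192.168.1.240-192.168.1.250
  cat <<EOF
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: home-pool
  namespace: metallb-system
spec:
  addresses:
    - $range
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: home-l2
  namespace: metallb-system
spec:
  ipAddressPools:
    - home-pool
EOF
}

render_metallb_pool 192.168.1.240-192.168.1.250
```

Piping the output into `kubectl apply -f -` would create the pool. Any service of type LoadBalancer should then receive an external IP from that range, which shows up in `kubectl get services`.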

So, let’s install an ingress controller. There are again several choices, namely Nginx, HAProxy, Traefik and others. I used Nginx, which is based on the web server and reverse proxy server of the same name. This time, you can simplify the installation process by using a Helm chart for the installation of the Nginx ingress controller. I would recommend using the mentioned Lens UI for installing Helm charts, but you can also do it from the command line. The important thing is that you need to supply an ARM64 Docker image for Nginx instead of the default image, which only supports the x86 architecture. To achieve this, the Nginx image has to be changed from k8s.gcr.io/ingress-nginx/controller to docker.io/kubeimages/nginx-ingress-controller in the YAML manifest. Check your version of the Helm chart; it may be possible to supply the image name via the --set key=value mechanism.

Once the ingress controller is up and running, we only have to add the icing on the cake, which is the automatic provisioning of volumes (disk space) to containers in Kubernetes. This can be achieved by installing OpenEBS, a storage manager for Kubernetes. OpenEBS frees you from having to supply persistent volumes manually to Kubernetes deployments. Once again, this is best done via the Helm package manager, and in this case it’s just a one-liner since OpenEBS supports ARM64 out-of-the-box. The very last thing to do is then to define a default storage class in Kubernetes, so OpenEBS can actually do its job. This is accomplished with the following command:

    kubectl patch storageclass openebs-hostpath -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

The openebs-hostpath storage class creates local volumes for persistent volume claims on the nodes where and when they are required. Voilà - your k8s cluster is ready.
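To check that the default storage class is picked up, you can create a persistent volume claim that does not name any storage class. The following sketch prints such a claim; the claim name demo-data and the size are placeholders.

```shell
#!/bin/sh
# Print a PersistentVolumeClaim without a storageClassName,
# so that the cluster's default storage class is used.
render_pvc() {
  name="$1"   # name of the claim (placeholder)
  size="$2"   # requested capacity, e.g. 1Gi
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $name
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: $size
EOF
}

render_pvc demo-data 1Gi
```

After piping the output into `kubectl apply -f -`, `kubectl get pvc` should show openebs-hostpath as the storage class of the claim. Do not be surprised if the claim stays in the Pending state at first: local volumes of this kind are usually only created once a pod that mounts the claim is scheduled onto a node.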